Ward Gauderis

Hello! I’m an FWO PhD Fellow in Brussels, supervised by Prof. Geraint Wiggins, studying compositionality as a mathematical foundation for deep learning. I use tensor networks and category theory to study neural representations as induced by the computations encoded in a model’s weight structure.

Rather than reading tea leaves in activation space, I treat the model’s weights as a formal compositional system, so emergent behaviour becomes a direct function of its algebraic wiring. This enables a divide-and-conquer approach that naturally links local mechanisms to global properties.

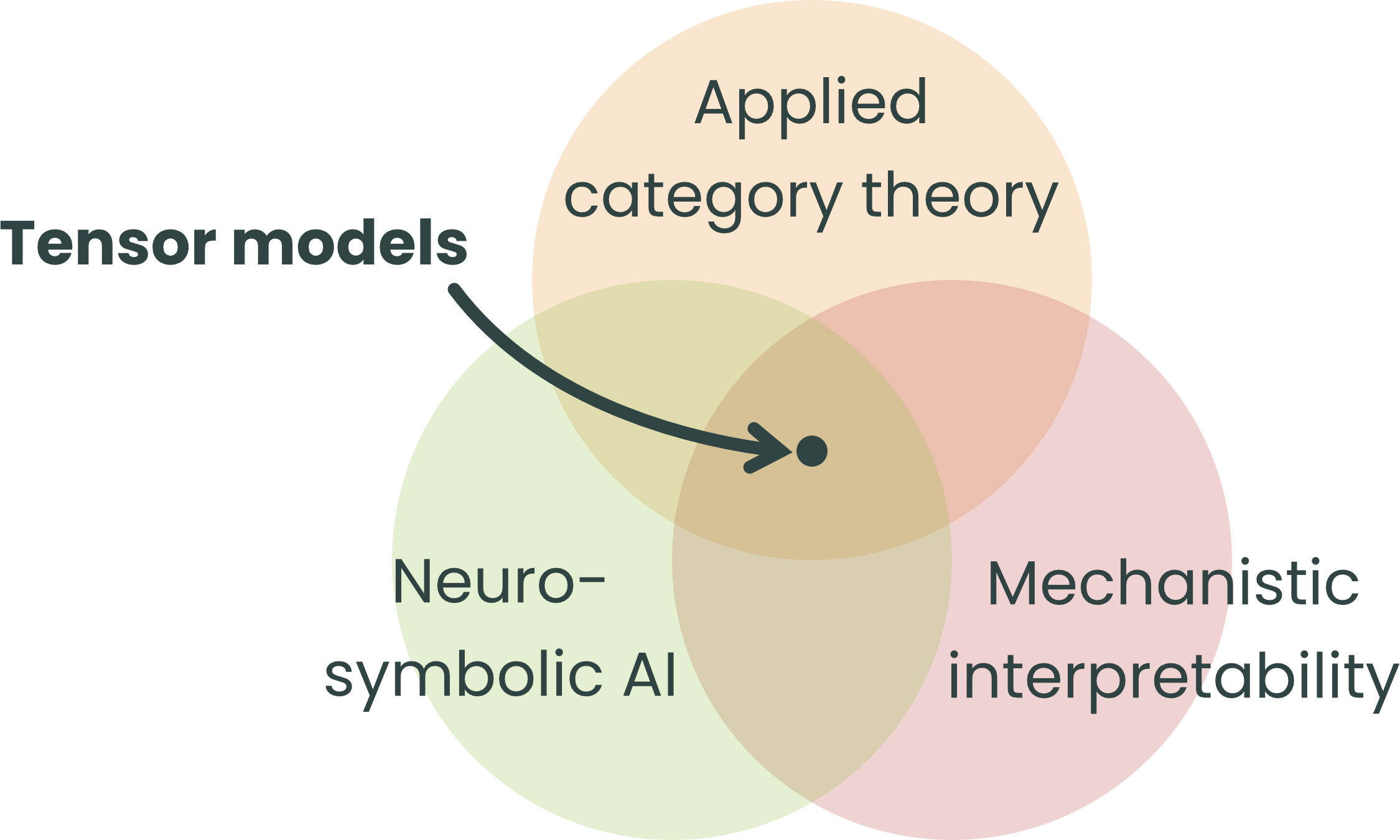

My work proposes tensor models to bridge neuro-symbolic and mechanistic analysis, aiming to understand how models compose concepts to generalise… or not.

Q-CHARM

How can compositional design improve compositional behaviour?

My FWO-funded PhD project, Q-CHARM, takes this question seriously by distinguishing between a model’s architecture (its compositional design) and the structure that emerges during learning (its compositional behaviour). The relationship between the two is less obvious than it looks: deep networks cannot efficiently learn compositional functions from data alone, so what you build in shapes what you can hope to get out.

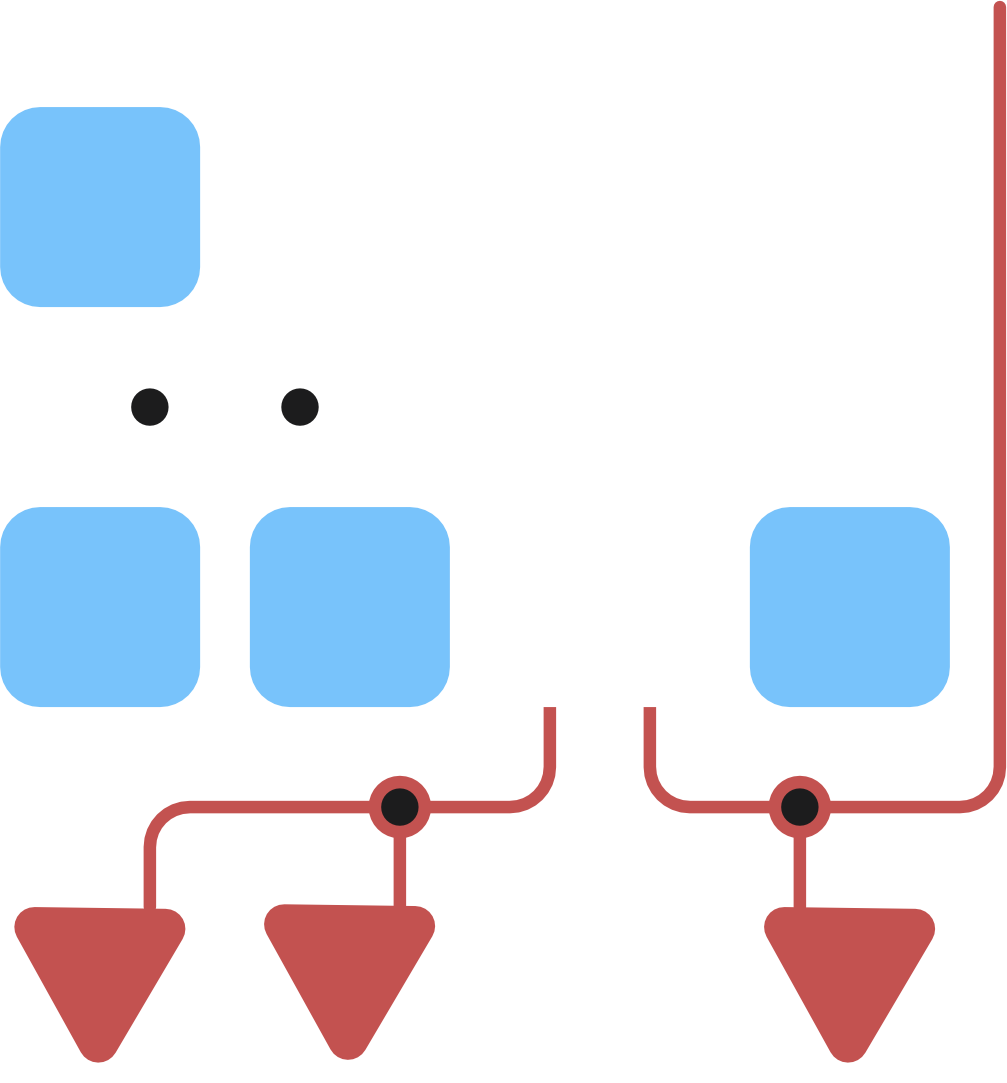

I bridge two complementary paths: imposing explicit structure before training (neuro-symbolic design) and exposing implicit structure after training (mechanistic interpretability). Embedding domain structure is essential to guide learning toward representations that align with human understanding and generalise well.

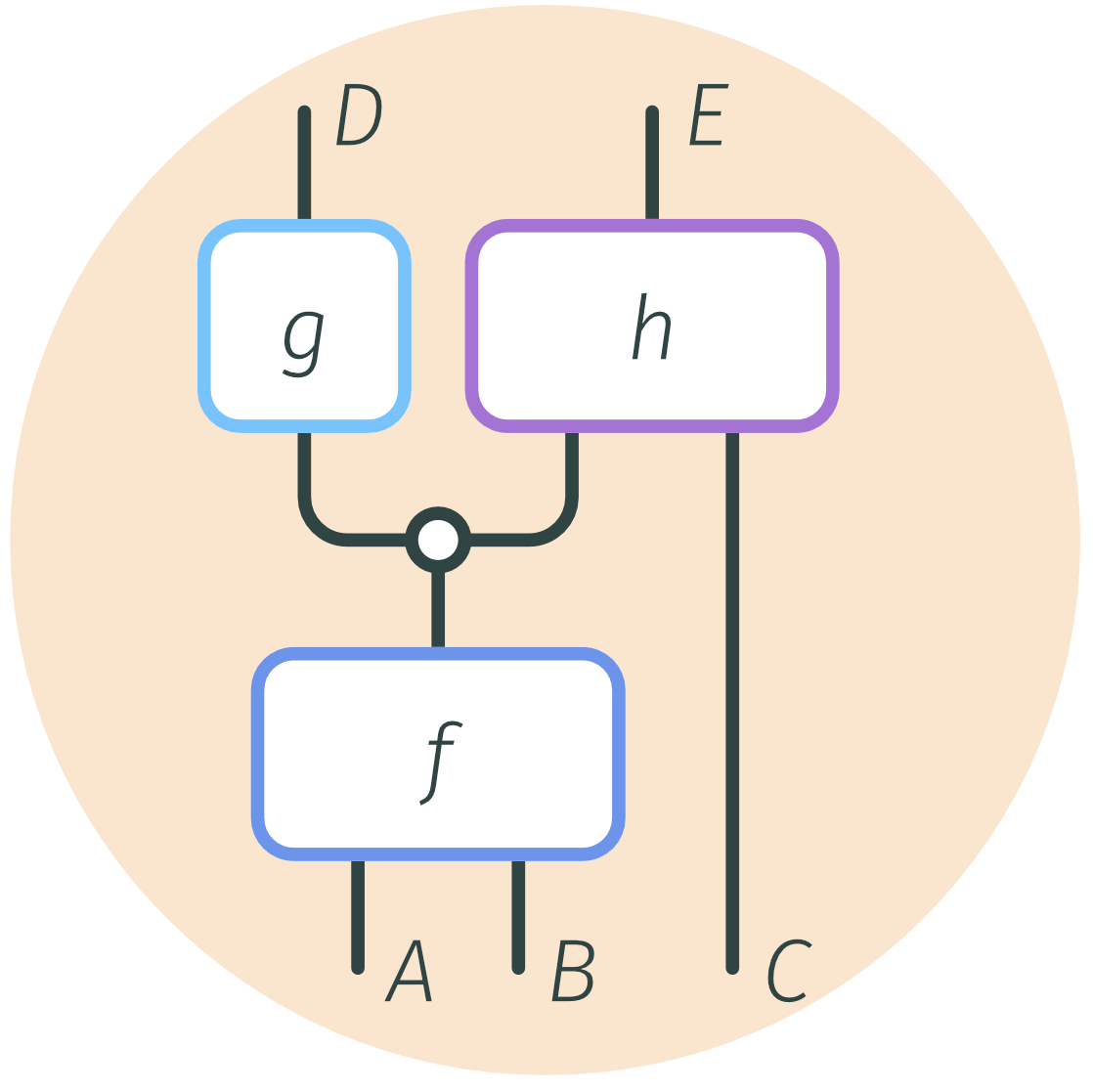

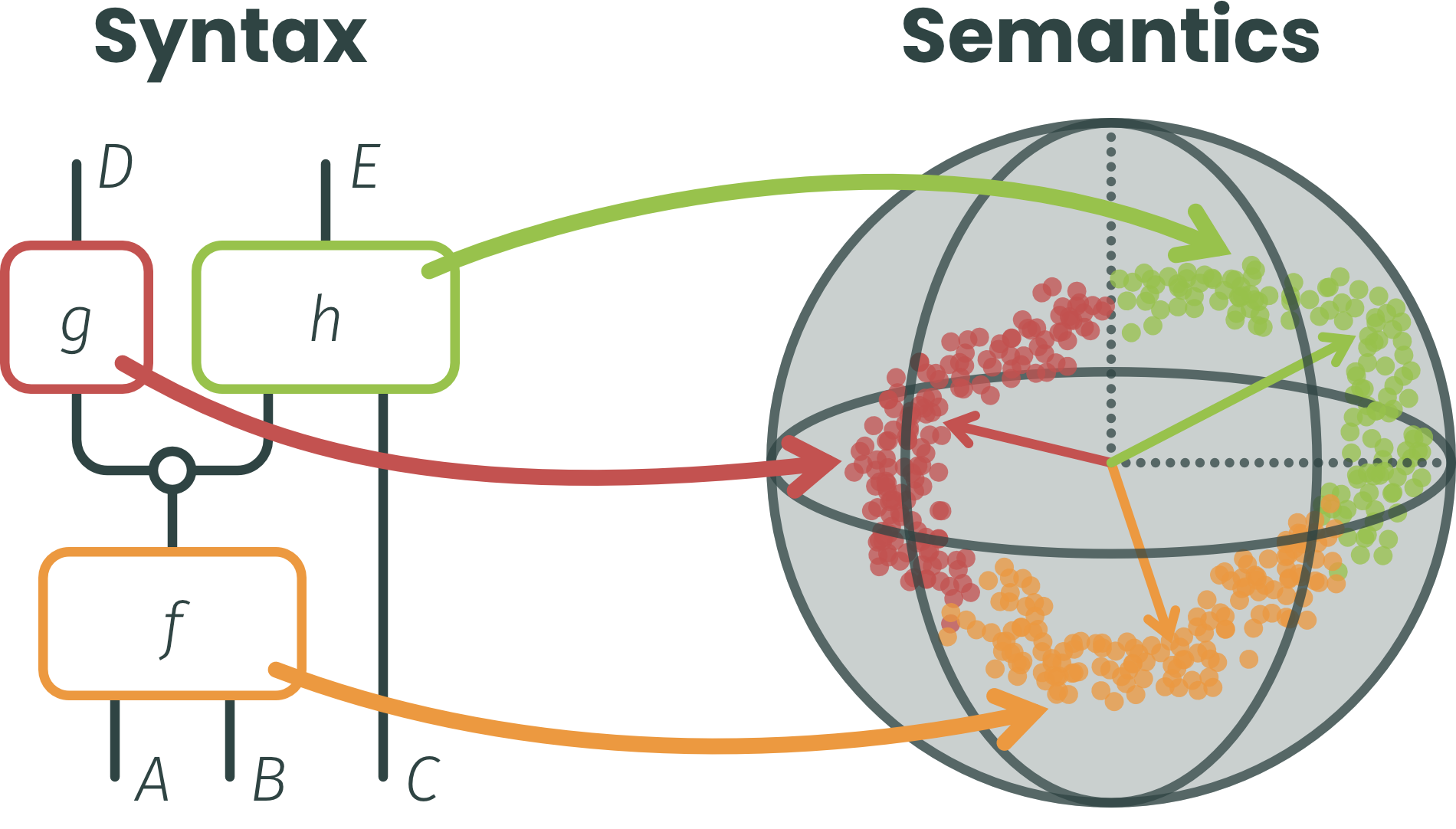

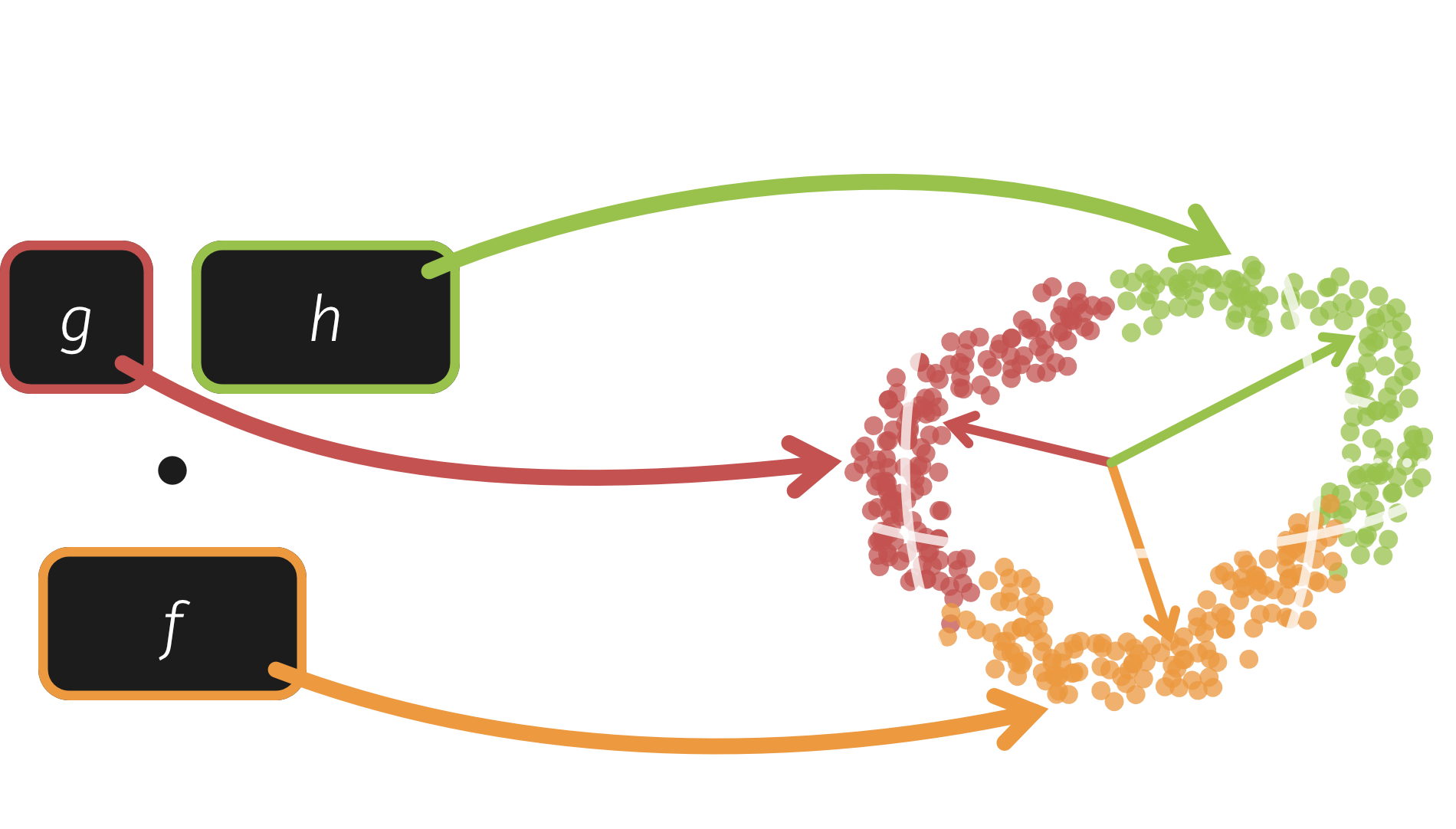

To formalise this, I use string diagrams (life is too short for indices) to cast models as rigorous mathematical objects, cleanly separating high-level Syntax (symbolic rules and structure) from low-level Semantics (subsymbolic representations). As a devout Yoneda disciple, I believe this categorical grounding is the only way to formally reason about behaviour beyond the training set.

The practical blueprint for this vision lies in tensor models. They unify the expressivity of neural networks with the tractability of tensor networks. Because their weights possess a well-understood geometry, we can perform tractable analysis both pre- and post-training. While central to applied category theory and neuro-symbolic AI, they are rarely combined with interpretability; my work unites these perspectives.

Compositional Interpretability

Current mechanistic interpretability lacks formal foundations, relying on post-hoc activation heuristics that often assume a layer-by-layer stratification. This makes it nearly impossible to tell whether a feature is causally useful globally or just a local artefact.

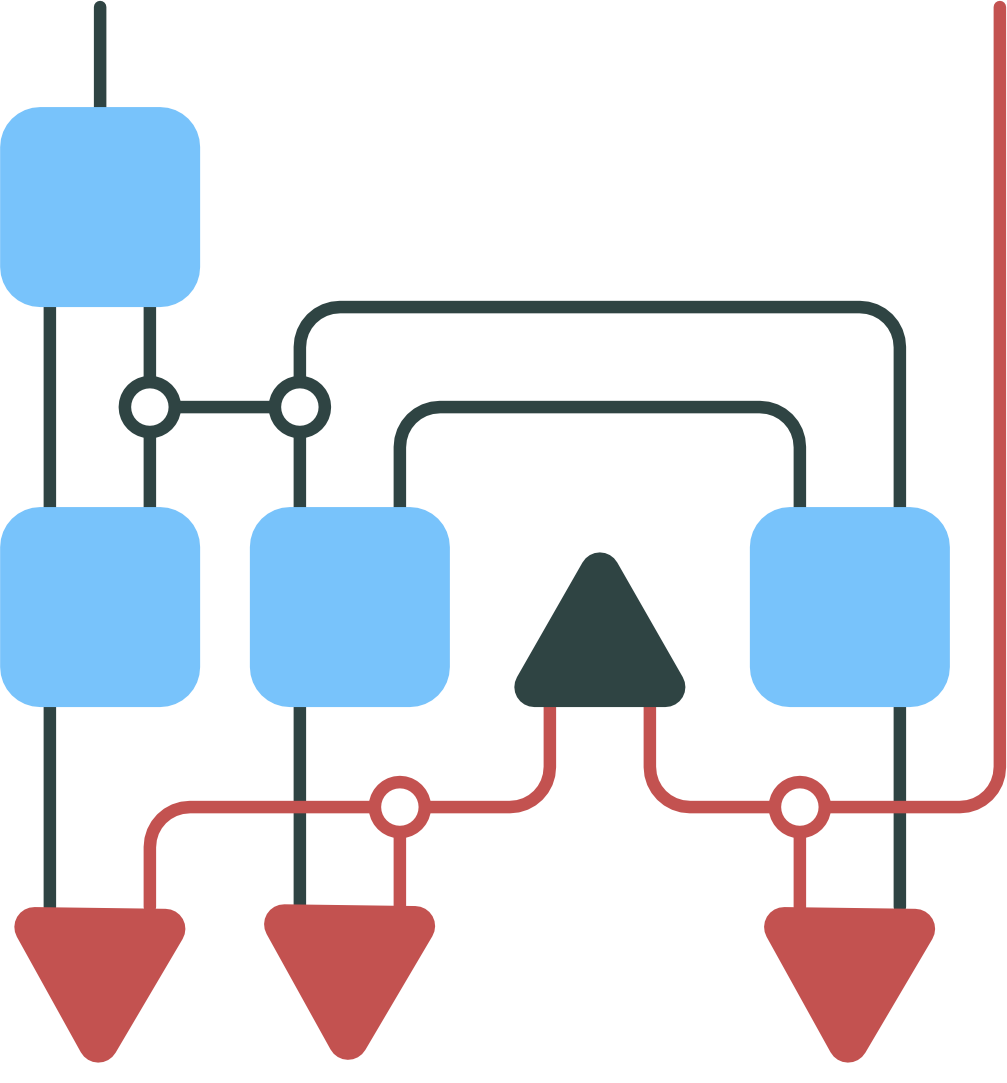

The CompInterp framework shifts the focus from isolated features to their interactions as first-class citizens. To achieve measurable interpretability, we ground our analysis in formal decompositions rather than data-dependent heuristics:

- Unified Algebra: By formulating weights, data, and subcircuit interactions via tensor contraction, standard matrix decompositions (SVD, ICA, etc.) naturally lift to complex architectures. Since the result remains a tensor model, we can trace discovered mechanisms back to the full architecture.

- Weight-Based Analysis: Tensor networks capture higher-order relations between representation spaces. Analysing their polynomial coefficients directly in the weight geometry means our conclusions don’t depend on the training distribution unless we want them to.

- Disentangling Interactions: To filter out spurious correlations, decompositions must balance complexity and faithfulness. Because these properties are themselves compositional, they propagate cleanly through the model.
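To make the weight-based idea concrete, here is a minimal sketch on a toy bilinear layer, chosen only because it is one of the simplest tensor models. It is an illustration under stated assumptions, not the CompInterp implementation: the layer form `y = (W x) * (V x)`, the random weights, and the names `W`, `V`, `u`, and `interaction_matrix` are all hypothetical. The point is that a scalar readout of such a layer is a quadratic form whose matrix is built from the weights alone, so a standard eigendecomposition exposes its interactions without touching any data.

```python
import numpy as np

rng = np.random.default_rng(0)
d_in, d_hidden = 4, 3

# Hypothetical weights of a toy bilinear layer: y = (W x) * (V x)
W = rng.normal(size=(d_hidden, d_in))
V = rng.normal(size=(d_hidden, d_in))


def bilinear_layer(x):
    """Elementwise product of two linear maps of the same input."""
    return (W @ x) * (V @ x)


def interaction_matrix(u):
    """Contract an output direction u with the layer's third-order tensor.

    The tensor B[k, i, j] = W[k, i] * V[k, j] is never materialised;
    einsum performs the contraction over k directly.
    """
    Q = np.einsum("k,ki,kj->ij", u, W, V)
    # Only the symmetric part contributes to the quadratic form x^T Q x.
    return 0.5 * (Q + Q.T)


# An arbitrary readout direction in the layer's output space (assumption).
u = rng.normal(size=d_hidden)
Q = interaction_matrix(u)

# Data-independent decomposition: eigenvectors are input directions,
# eigenvalues weight how strongly each one drives the readout.
eigvals, eigvecs = np.linalg.eigh(Q)

# Sanity check on one random input: all three routes agree.
x = rng.normal(size=d_in)
direct = u @ bilinear_layer(x)
via_weights = x @ Q @ x
via_spectrum = np.sum(eigvals * (eigvecs.T @ x) ** 2)
```

The decomposition is computed before any input is drawn; `x` is used only to check that the three expressions coincide. Truncating the spectrum to the largest-magnitude eigenvalues would be one simple way to trade complexity against faithfulness.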

Research Interests

If you want my full attention, just mention any of these…

- Compositionality in AI: Category theory, string diagrams, geometric deep learning

- Mechanistic Interpretability: Weight space analysis, parameter decompositions

- Neuro-symbolic Architectures: Probabilistic circuits, tensor logic

- Quantum-ish Mathematics: Tensor networks, Hilbert spaces, information geometry

- Effective Theories of DL: Renormalisation, algebraic geometry, stochastic complexity

- Models of Cognition & Creativity: Active inference, conceptual spaces

Hobbies

When I’m not agonising about model structure, I’m probably skating through the city, singing and playing piano, or falling down a philomathematical rabbit hole. I also love building FOSS, playing chess or other (board) games, and conversations that stretch the brain a little.

News

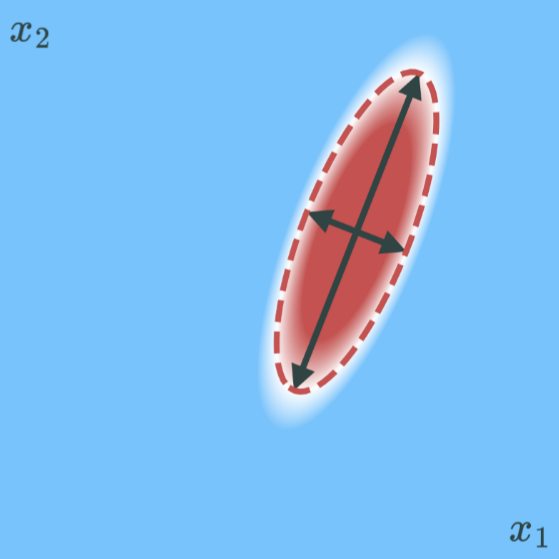

| Date | News |
|---|---|
| May 12, 2026 | Our work on finding manifolds got a plenary pitch at the Flanders AI Research Day! |
| Mar 29, 2026 | I am mentoring for MARS V (Mentorship for Alignment Research Students)! |
| Dec 07, 2025 | Our work on bilinear autoencoders got a spotlight at the Mechanistic Interpretability Workshop (NeurIPS 2025)! |
| Nov 01, 2025 | I have been awarded a PhD Fellowship fundamental research from FWO to study the role of compositionality in deep learning! |
| Oct 15, 2025 | Come check out our deep dive on compositional interpretability at the Flanders AI Research Day 2025! |